Create SIEM rules that detect real threats reliably by starting with a sharp detection objective, then iterating with data-driven tuning and contextual enrichment. Effective SIEM rule development is a repeatable engineering practice that balances precision and coverage while minimizing alert noise and operational cost.

Why precise SIEM rule design matters for enterprise security

High-quality SIEM rules turn raw telemetry into actionable incidents. Poorly authored rules generate alert storms that drown analysts and hide real compromises. Precision matters for three outcomes that enterprise security teams measure. First, the signal-to-noise ratio controls analyst productivity. Second, contextual accuracy reduces investigation time by providing the right attribution and enrichment at alert creation. Third, performance and scalability determine whether the rule can run across high-volume telemetry without degrading ingestion or search latency. Organizations that treat SIEM rule writing as detection engineering contain incidents faster and improve incident response outcomes.

Core principles for writing effective SIEM rules

1. Start with a clear detection objective

A rule must answer a single question about attacker behavior. Define the threat scenario the rule is intended to catch. Specify the adversary goal, the common tactics, the observable events and the minimum conditions that justify an alert. Examples of clear objectives include catching unusual authentication patterns that suggest credential misuse, or identifying data exfiltration over uncommon protocols. Avoid rules that try to cover several unrelated detections in one logical expression.

2. Focus on fidelity and context

High fidelity means the rule produces alerts that are highly likely to represent true malicious activity. Add contextual signals such as user risk score, device criticality, geolocation anomalies and threat intelligence indicators to raise fidelity. Enrichment at detection time reduces the triage steps analysts must perform after the alert fires.

3. Define measurable success criteria

Each rule should have quantitative targets for precision and recall or at minimum for precision and alert volume. Establish baseline metrics before tuning and set thresholds that trigger a rule review. These metrics inform when to retire, refine or expand detection coverage.
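The targets described above can be computed from labelled triage outcomes. The following is a minimal sketch, assuming analysts record true positives, false positives and missed incidents per rule; the `RuleOutcome` name, the 0.8 precision target and the 50 alert ceiling are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class RuleOutcome:
    """Labelled triage counts for one rule over a review period."""
    true_positives: int
    false_positives: int
    false_negatives: int  # known incidents the rule missed


def precision(o: RuleOutcome) -> float:
    """Share of fired alerts that were real."""
    fired = o.true_positives + o.false_positives
    return o.true_positives / fired if fired else 0.0


def recall(o: RuleOutcome) -> float:
    """Share of known incidents the rule caught."""
    actual = o.true_positives + o.false_negatives
    return o.true_positives / actual if actual else 0.0


def needs_review(o: RuleOutcome, min_precision: float = 0.8, max_alerts: int = 50) -> bool:
    """Flag the rule when precision or alert volume misses its target."""
    alerts = o.true_positives + o.false_positives
    return precision(o) < min_precision or alerts > max_alerts


outcome = RuleOutcome(true_positives=18, false_positives=6, false_negatives=2)
print(precision(outcome), recall(outcome), needs_review(outcome))
```

Recall is only meaningful when missed incidents are known, which is why the text allows falling back to precision and alert volume.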

4. Design for performance and scale

Rules must be optimized to run in the SIEM query engine without consuming excessive resources. Use indexed fields and narrow time windows where possible. Prefer streaming detection patterns or incremental aggregation over full historical scans when the platform supports them. Avoid running expensive regular expressions across high-cardinality fields unless a cheaper filter has already narrowed the event set.
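The prefiltering idea can be illustrated outside any particular query language. This sketch assumes events are dictionaries with hypothetical `process_name` and `command_line` fields, and the regular expression is a simplified illustration rather than a complete detection for encoded PowerShell.

```python
import re

# Simplified, illustrative pattern: an encoded-command flag followed by a
# long base64-looking argument.
ENCODED_PS = re.compile(r"-e(nc(odedcommand)?)?\s+[A-Za-z0-9+/=]{20,}", re.IGNORECASE)


def match_encoded_powershell(events):
    """Yield events whose command line looks like encoded PowerShell."""
    for ev in events:
        # Cheap equality check on a low-cardinality field first...
        if ev.get("process_name", "").lower() != "powershell.exe":
            continue
        # ...so the regex only runs on the small surviving subset.
        if ENCODED_PS.search(ev.get("command_line", "")):
            yield ev


sample_events = [
    {"host": "ws-01", "process_name": "powershell.exe",
     "command_line": "powershell.exe -enc SQBFAFgAIAAoAE4AZQB3AC0ATwBiAGoA"},
    {"host": "ws-02", "process_name": "chrome.exe",
     "command_line": "chrome.exe -enc AAAAAAAAAAAAAAAAAAAAAAAA"},
    {"host": "ws-03", "process_name": "powershell.exe",
     "command_line": "powershell.exe Get-Process"},
]
print([ev["host"] for ev in match_encoded_powershell(sample_events)])
```

In a real SIEM the equality check would be a filter on an indexed field, and the regex would be applied only to the rows that filter returns.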

5. Plan for operational tuning and lifecycle

Tuning is continuous. Create a repeatable process for backtesting, false positive review and promotion to production. Version control rule content and keep change logs for auditing. Integrate feedback from analysts to close the loop on rule usefulness.

Step by step process to author a SIEM rule

Define the threat hypothesis

Document the attack vector, attacker objective and list of observable indicators. Use threat model frameworks such as MITRE ATT&CK to map tactics and techniques to telemetry you own. This creates a testable hypothesis for detection.

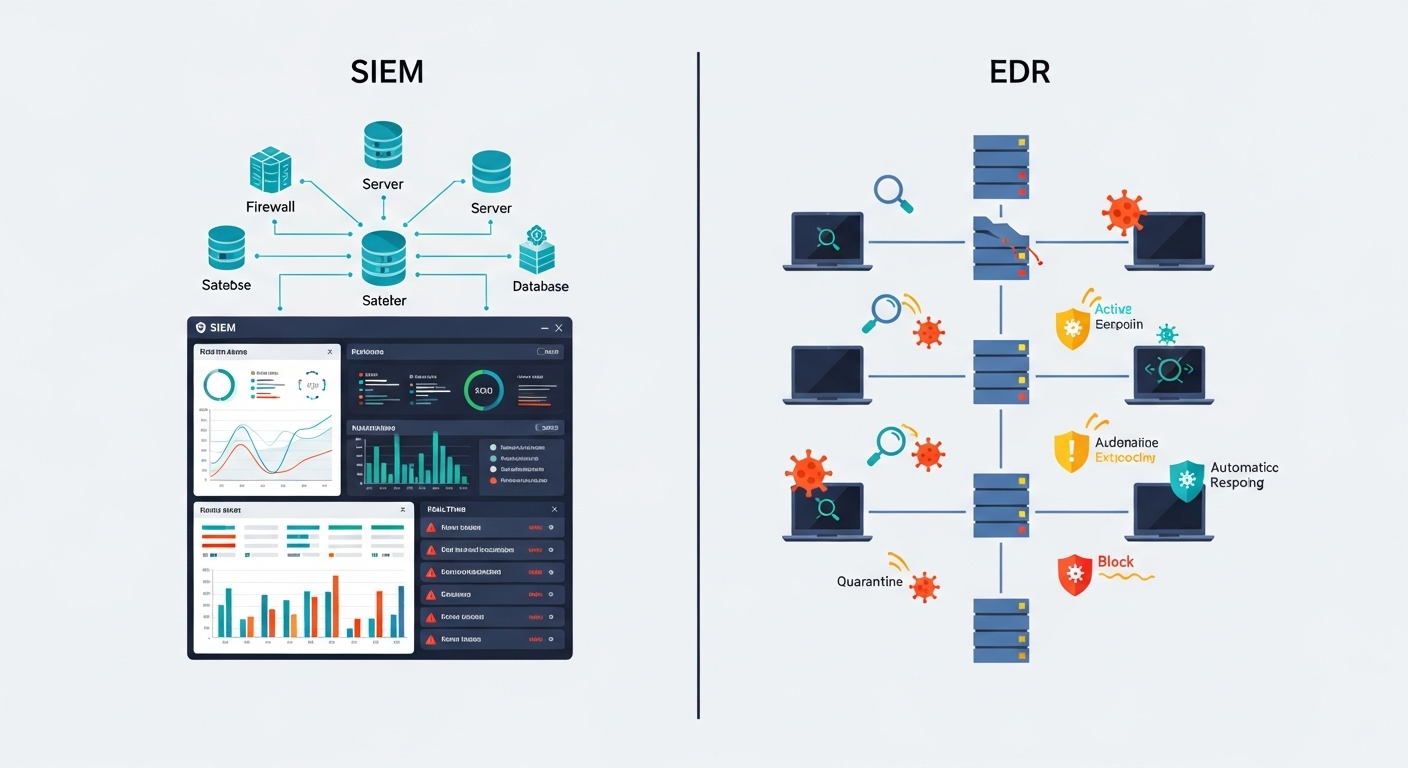

Identify required telemetry

Confirm the SIEM receives the necessary logs and fields. Examples include process creation, command line, network flows, authentication logs and EDR alerts. If telemetry is missing, flag data gaps for your observability team or vendor integration such as CyberSilo services.
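A hypothesis and its telemetry requirements can be captured as a small record so data gaps surface before any query is written. This is a sketch under assumptions: the `DetectionHypothesis` class, its field names and the source names such as `vpn_auth` are hypothetical. T1110 is the MITRE ATT&CK identifier for Brute Force.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionHypothesis:
    name: str
    attacker_objective: str
    attack_technique: str        # MITRE ATT&CK technique ID
    observables: tuple           # evidence the attack should leave behind
    required_sources: frozenset  # log sources the rule depends on

    def telemetry_gaps(self, available_sources):
        """Return required sources the SIEM does not currently receive."""
        return sorted(self.required_sources - set(available_sources))


hypothesis = DetectionHypothesis(
    name="Password guessing against VPN accounts",
    attacker_objective="Obtain valid credentials",
    attack_technique="T1110",  # Brute Force
    observables=("many failed logins for one account", "success after failures"),
    required_sources=frozenset({"vpn_auth", "idp_auth"}),
)

# Hypothetical inventory of sources currently onboarded.
onboarded = {"idp_auth", "edr_process", "netflow"}
print(hypothesis.telemetry_gaps(onboarded))
```

A non-empty result is the list of gaps to raise with the observability team before rule development continues.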

Choose a detection technique

Select a rule type such as indicator match, anomaly detection, behavioral correlation, threshold crossing or pattern sequencing. The choice depends on the quality of the signal and the false positive rate you can tolerate.

Write the core logic

Construct a minimal query that implements the hypothesis. Use indexed fields, time constraints and enrichment joins. Document the assumptions and include example event samples the rule should match.
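As one example of minimal core logic, a threshold-crossing rule for failed logins might look like the sketch below. It assumes time-ordered events with hypothetical `user`, `action` and `timestamp` fields; the threshold of 5 failures in 5 minutes is an illustrative assumption to be tuned against your own baseline.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def failed_login_bursts(events, threshold=5, window=timedelta(minutes=5)):
    """Return (user, timestamp) alerts when a user reaches `threshold`
    failed logins inside a sliding `window`. Events must be time-ordered."""
    recent = defaultdict(deque)  # per-user timestamps still inside the window
    alerts = []
    for ev in events:
        if ev["action"] != "login_failure":
            continue
        q = recent[ev["user"]]
        q.append(ev["timestamp"])
        # Drop failures that have aged out of the window.
        while q and ev["timestamp"] - q[0] > window:
            q.popleft()
        if len(q) >= threshold:
            alerts.append((ev["user"], ev["timestamp"]))
            q.clear()  # reset so one burst raises one alert
    return alerts


start = datetime(2024, 1, 1, 9, 0)
sample = [
    {"user": "alice", "action": "login_failure", "timestamp": start + timedelta(minutes=i)}
    for i in range(5)
]
sample.append({"user": "bob", "action": "login_failure", "timestamp": start + timedelta(minutes=5)})
alerts = failed_login_bursts(sample)
print(alerts)
```

The sample list doubles as the documented example of events the rule should match: five failures for one user within the window fire once, and a single failure for another user does not.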

Enrich and contextualize

Add user, asset and threat intelligence enrichment. Correlate events from multiple sources to reduce noise. Where available, leverage product features in Threat Hawk SIEM for automated enrichment and context linking.
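Enrichment at detection time can be sketched as a few lookups applied before the alert is raised. The lookup tables, field names and severity cut-offs below are hypothetical stand-ins for a CMDB export, a user risk feed and a threat intelligence list; the IP addresses come from ranges reserved for documentation.

```python
# Hypothetical lookup tables; in practice these come from enrichment pipelines.
ASSET_CRITICALITY = {"db-prod-01": "high", "kiosk-17": "low"}
USER_RISK = {"alice": 20, "svc-backup": 75}
THREAT_INTEL_IPS = {"203.0.113.50"}


def enrich(alert):
    """Return a copy of the alert with asset, user and intel context attached."""
    enriched = dict(alert)
    enriched["asset_criticality"] = ASSET_CRITICALITY.get(alert.get("host"), "unknown")
    enriched["user_risk"] = USER_RISK.get(alert.get("user"), 0)
    enriched["intel_match"] = alert.get("src_ip") in THREAT_INTEL_IPS
    return enriched


def severity(enriched):
    """Combine context into a coarse severity; the cut-offs are illustrative."""
    high_value = enriched["asset_criticality"] == "high"
    risky_user = enriched["user_risk"] >= 70
    if enriched["intel_match"] or (high_value and risky_user):
        return "high"
    if high_value or risky_user:
        return "medium"
    return "low"


alert = {"rule": "failed_login_burst", "host": "db-prod-01",
         "user": "svc-backup", "src_ip": "198.51.100.7"}
context = enrich(alert)
print(severity(context))
```

Because the context travels with the alert, the analyst sees asset and user attribution without performing separate lookups.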

Test and backtest

Run the rule against historical windows and synthetic data. Record true positives and false positives. Tuning iterations should reduce false positives without eroding coverage.

Deploy with canary and monitor

Deploy initially to a subset of assets or as a low severity alert. Monitor metrics and analyst feedback for a defined evaluation period, then escalate to production severity if the targets are met.
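One way to pick a stable canary subset is to hash the asset identifier, so the same assets stay in the group for the whole evaluation period. This is a sketch of that idea only; `in_canary` is a hypothetical helper, and many SIEMs offer asset groups or tags that serve the same purpose.

```python
import hashlib


def in_canary(asset_id: str, percent: int) -> bool:
    """Deterministically place roughly `percent`% of assets in the canary group."""
    digest = hashlib.sha256(asset_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket 0..99
    return bucket < percent


hosts = [f"ws-{n:03d}" for n in range(200)]
canary_hosts = [h for h in hosts if in_canary(h, 10)]
print(len(canary_hosts), "of", len(hosts), "hosts evaluate the new rule")
```

Raising `percent` only ever adds assets to the group, so the rollout can widen without reshuffling which hosts have already been observed.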

Maintain and retire

Review rule performance quarterly or after major environment changes. Retire rules that underperform or replace them with better detections. Keep a changelog and link alerts to playbooks for consistent response.

Common detection patterns and templates

Below are repeatable rule patterns with their typical telemetry and tuning notes. Use these templates as starting points and adapt field names to your data model.

Writing robust rule logic without vendor lock

Express detection logic in a platform agnostic way where practical. Define inputs and outputs using your canonical schema names. Create translation guides that map canonical fields to vendor specific names. Maintain a library of rule templates and test harnesses so rules can be ported between SIEMs or executed in supplementary analytics platforms. When using product specific features such as fast indexed joins or multi stage detection pipelines document dependencies so rules remain auditable.
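A translation guide can be as simple as a per-platform field map applied to a rule written in canonical names. In this sketch the platform labels `vendor_a` and `vendor_b` and all field names are placeholders for illustration, not the schema of any specific product.

```python
# Canonical field name -> platform-specific field name (all hypothetical).
FIELD_MAPS = {
    "vendor_a": {"user": "user.name", "src_ip": "source.ip", "action": "event.action"},
    "vendor_b": {"user": "UserName", "src_ip": "SrcIpAddr", "action": "EventType"},
}


def translate(condition: dict, vendor: str) -> dict:
    """Rewrite a canonical field/value condition into one vendor's field names."""
    mapping = FIELD_MAPS[vendor]
    missing = [f for f in condition if f not in mapping]
    if missing:
        # Fail loudly so unmapped dependencies are documented, not silently dropped.
        raise KeyError(f"no {vendor} mapping for canonical fields: {missing}")
    return {mapping[f]: v for f, v in condition.items()}


CANONICAL_RULE = {"action": "login_failure", "src_ip": "203.0.113.50"}
print(translate(CANONICAL_RULE, "vendor_a"))
print(translate(CANONICAL_RULE, "vendor_b"))
```

Keeping the rule in canonical form and the mapping in a separate table means a platform migration changes the table, not every rule.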

Testing, validation and continuous tuning

Backtesting with historical data

Run new rules against representative historical windows that contain both normal activity and known incidents. Measure detection rate and false positive rate. Use labeled incidents where available or inject curated test incidents that mimic attacker behavior. Document test results and expected outcomes for each rule iteration.
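A backtest over labelled history reduces to tallying the four outcomes per event. The sketch below assumes each historical event carries a hypothetical `label` field of `malicious` or `benign`; the example rule and its one megabyte cut-off are illustrative only.

```python
def backtest(rule, labelled_events):
    """Run `rule` (a predicate) over labelled events and tally outcomes."""
    tally = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for ev in labelled_events:
        fired = rule(ev)
        malicious = ev["label"] == "malicious"
        if fired:
            tally["tp" if malicious else "fp"] += 1
        else:
            tally["fn" if malicious else "tn"] += 1
    positives = tally["tp"] + tally["fn"]
    negatives = tally["fp"] + tally["tn"]
    return {
        **tally,
        "detection_rate": tally["tp"] / positives if positives else 0.0,
        "false_positive_rate": tally["fp"] / negatives if negatives else 0.0,
    }


def large_upload_on_odd_port(ev):
    """Illustrative rule: big outbound transfer on a port other than 80 or 443."""
    return ev["bytes_out"] > 1_000_000 and ev["dst_port"] not in (80, 443)


history = [
    {"bytes_out": 5_000_000, "dst_port": 8443, "label": "malicious"},  # caught
    {"bytes_out": 2_000_000, "dst_port": 443, "label": "malicious"},   # missed
    {"bytes_out": 3_000_000, "dst_port": 22, "label": "benign"},       # false positive
    {"bytes_out": 10_000, "dst_port": 443, "label": "benign"},
    {"bytes_out": 20_000, "dst_port": 53, "label": "benign"},
    {"bytes_out": 800, "dst_port": 80, "label": "benign"},
]
report = backtest(large_upload_on_odd_port, history)
print(report)
```

Storing the report alongside each rule version gives the documented test results the process calls for.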

Automated synthetic validation

Create synthetic event generators that exercise edge cases in the detection logic. This helps validate both the rule and any enrichment pipelines. Where the SIEM supports automated testing, make synthetic tests part of the CI pipeline for rule deployment.
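A synthetic generator paired with a table of edge cases can run as an ordinary test. In this sketch `rule_fires` is a stand-in for the rule under test, with an assumed threshold of 5 failures in 5 minutes; the event fields are hypothetical.

```python
from datetime import datetime, timedelta


def synthetic_failed_logins(user, count, start, spacing):
    """Build `count` failed-login events for `user`, `spacing` apart."""
    return [
        {"user": user, "action": "login_failure", "timestamp": start + i * spacing}
        for i in range(count)
    ]


def rule_fires(events, threshold=5, window=timedelta(minutes=5)):
    """Stand-in rule: True when `threshold` failures fall within `window`."""
    times = sorted(e["timestamp"] for e in events if e["action"] == "login_failure")
    return any(
        times[i + threshold - 1] - times[i] <= window
        for i in range(len(times) - threshold + 1)
    )


start = datetime(2024, 1, 1, 9, 0)
EDGE_CASES = [
    # (description, events, expected result)
    ("one below threshold", synthetic_failed_logins("u1", 4, start, timedelta(seconds=10)), False),
    ("exactly at threshold", synthetic_failed_logins("u1", 5, start, timedelta(seconds=10)), True),
    ("threshold met but spread out", synthetic_failed_logins("u1", 5, start, timedelta(minutes=2)), False),
    ("span equals the window", synthetic_failed_logins("u1", 5, start, timedelta(seconds=75)), True),
]

failures = [name for name, events, expected in EDGE_CASES if rule_fires(events) != expected]
print("failing edge cases:", failures)
```

Boundary cases such as "exactly at threshold" and "span equals the window" are where off-by-one mistakes in rule logic tend to hide, so they are worth encoding explicitly.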

Operational feedback loop

Embed a simple mechanism for analysts to mark alerts as true positive, false positive or unknown. Aggregate this feedback into tuning sprints. Continuous feedback reduces alert volume and keeps detections aligned with the evolving environment and attacker tactics.
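Aggregating analyst verdicts into a tuning backlog might look like the following sketch. The rule IDs, verdict strings and the 50 percent false positive cut-off are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

VALID_VERDICTS = {"true_positive", "false_positive", "unknown"}


def summarize_feedback(feedback):
    """Count verdicts per rule from (rule_id, verdict) pairs."""
    per_rule = defaultdict(Counter)
    for rule_id, verdict in feedback:
        if verdict not in VALID_VERDICTS:
            raise ValueError(f"unexpected verdict: {verdict!r}")
        per_rule[rule_id][verdict] += 1
    return per_rule


def tuning_candidates(per_rule, max_fp_ratio=0.5):
    """Rules whose decided alerts are mostly false positives."""
    candidates = []
    for rule_id, counts in per_rule.items():
        decided = counts["true_positive"] + counts["false_positive"]
        if decided and counts["false_positive"] / decided > max_fp_ratio:
            candidates.append(rule_id)
    return sorted(candidates)


feedback = [
    ("R1", "false_positive"), ("R1", "false_positive"), ("R1", "true_positive"),
    ("R2", "true_positive"), ("R2", "unknown"),
    ("R3", "unknown"),
]
print(tuning_candidates(summarize_feedback(feedback)))
```

Alerts marked unknown are excluded from the ratio so that undecided triage does not make a rule look better or worse than it is.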

Performance and scalability considerations

High volume environments demand rules that scale. Use these techniques to keep rule impact low. First, prefer indexed fields in where clauses. Second, use narrow time windows and incremental aggregation. Third, avoid global scans by scoping rules to asset groups when appropriate. Fourth, offload heavy enrichment to background pipelines and persist enrichment state so real time rules apply lookups rather than heavy joins. Finally, monitor rule runtime and resource use and create alerts when a rule exceeds expected cost metrics.

Operational tip: treat each rule like a production service. Track runtime resource usage and create guardrails so a poorly written rule cannot affect ingestion or searches. Regularly schedule performance reviews and capacity testing as part of rule governance.
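A basic runtime guardrail wraps rule execution with a timer and flags anything over budget. This sketch measures wall-clock time only; the budget values and the `slow_rule` delay are illustrative, and a real platform would also track memory and scanned volume through its own metrics.

```python
import time


def run_with_budget(rule_fn, events, budget_seconds):
    """Run a rule and report whether it stayed within its runtime budget."""
    started = time.perf_counter()
    matches = rule_fn(events)
    elapsed = time.perf_counter() - started
    return {
        "matches": matches,
        "elapsed_seconds": elapsed,
        "over_budget": elapsed > budget_seconds,
    }


def cheap_rule(events):
    return [e for e in events if e.get("action") == "login_failure"]


def slow_rule(events):
    time.sleep(0.05)  # stands in for an unscoped scan or heavy join
    return cheap_rule(events)


events = [{"action": "login_failure"}, {"action": "login_success"}]
for fn, budget in ((cheap_rule, 5.0), (slow_rule, 0.01)):
    result = run_with_budget(fn, events, budget)
    print(fn.__name__, "over budget:", result["over_budget"])
```

The `over_budget` flag is the signal that could feed an alert or automatically disable the rule, depending on how strict the guardrail needs to be.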

Operationalizing rules and integrating with SOC workflows

Detection to response is a pipeline. Every rule must map to a response action and a triage playbook. Link rule alerts to playbooks that contain analyst steps, evidence to collect and remediation guidance. Integrate with orchestration platforms to automate low risk containment tasks and free analyst time for complex investigations. Rules should include severity classifications, suggested analyst tasks and enrichment fields that populate incident records to reduce manual lookups.
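The requirement that every rule carries a severity, a playbook and analyst tasks can be checked mechanically before promotion. In this sketch the metadata keys, rule names and playbook identifier are hypothetical.

```python
REQUIRED_FIELDS = ("severity", "playbook", "analyst_tasks")


def unready_rules(rules):
    """Return {rule_id: [missing fields]} for rules not ready for SOC hand-off."""
    problems = {}
    for rule_id, meta in rules.items():
        # Empty strings and empty lists count as missing.
        missing = [f for f in REQUIRED_FIELDS if not meta.get(f)]
        if missing:
            problems[rule_id] = missing
    return problems


rules = {
    "failed_login_burst": {
        "severity": "medium",
        "playbook": "PB-credential-abuse",
        "analyst_tasks": ["confirm source IP ownership", "check for later success"],
    },
    "odd_port_upload": {"severity": "high"},
}
print(unready_rules(rules))
```

Running a check like this in the deployment pipeline keeps rules without a response path out of production.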

Governance, auditing and lifecycle management

Change control and versioning

Use a formal change control process for rule changes. Store rules in version control with clear commit messages and testing artifacts. Keep a change log that lists author, reviewer and reason for change. For high risk rule changes require peer review and a rollback plan.

Audit trails and compliance

Maintain auditable records that show when a rule was added, changed or removed and who approved it. This is essential for regulated environments and for post-incident reviews. Ensure the SIEM retains historical versions of executed queries and any enrichment transformations applied to evidence used in investigations.

Measuring rule effectiveness

Track metrics that quantify detection quality and operational cost. Typical metrics include detection precision, detection recall when labeled incidents are available, mean time to detect, analyst triage time per alert and alert volume per analyst per day. Use dashboards to track trends and set alerting thresholds that trigger rule review when performance drifts.
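Two of the operational metrics above are simple arithmetic over incident and alert records. This sketch assumes each incident record has hypothetical `started_at` and `detected_at` timestamps; the sample figures are invented for illustration.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(incidents):
    """Average gap between incident start and first detection, or None if empty."""
    deltas = [i["detected_at"] - i["started_at"] for i in incidents]
    return sum(deltas, timedelta()) / len(deltas) if deltas else None


def alerts_per_analyst_per_day(total_alerts, analysts, days):
    """Average daily alert load carried by each analyst."""
    return total_alerts / (analysts * days)


incidents = [
    {"started_at": datetime(2024, 1, 1, 9, 0), "detected_at": datetime(2024, 1, 1, 9, 30)},
    {"started_at": datetime(2024, 1, 2, 10, 0), "detected_at": datetime(2024, 1, 2, 11, 30)},
]
print(mean_time_to_detect(incidents))
print(alerts_per_analyst_per_day(total_alerts=420, analysts=4, days=7))
```

Tracked per rule and over time, these values show whether tuning is shortening detection or only shifting load between analysts.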

Real world deployment checklist

- Confirm telemetry availability and field mappings

- Validate detection objective and map to ATT&CK technique

- Write minimal core query then add enrichment

- Backtest across representative historic windows

- Deploy as low severity or canary for evaluation period

- Collect analyst feedback and iterate

- Version control and document all changes

- Retire or replace rules that underperform

Further resources and next steps

If your team needs hands-on assistance operationalizing these practices, consider vendor or consultancy partnerships for detection engineering. Explore curated rule libraries and comparative SIEM reviews, such as our analysis in the Top 10 SIEM tools review, to align capabilities with your needs. For platform-specific optimization, consider product features in Threat Hawk SIEM that accelerate enrichment and rule orchestration. If you would like a technical review of rule performance or help with deployment plans, please contact our security team to schedule an assessment. For general inquiries about our methodology or services, visit CyberSilo, where we publish detection guidance and managed service offerings.

Final recommendations

Treat SIEM rule writing as detection engineering, not a one-time activity. Define clear objectives, test rigorously and instrument rule performance. Use enrichment to deliver context and reduce analyst effort. Maintain governance and lifecycle processes so the detection estate adapts as the threat landscape and enterprise environment evolve. When teams combine disciplined rule design with automated testing and operational feedback, they close the gap between raw telemetry and timely containment.