Splunk SIEM is a commercial platform that implements Security Information and Event Management (SIEM) capabilities. It ingests log and event data from across the infrastructure and then applies indexing, analytics, correlation and case management to detect, investigate and respond to threats at scale. In practice, Splunk provides a pipeline for data collection and normalization, a search and analytics engine that powers investigations and dashboards, and integrations that enable automated response and orchestration. This article explains how Splunk SIEM works from data ingestion through detection and response, offers architectural and operational guidance for large enterprise deployments, compares functional capabilities, and maps practical migration and optimization steps for security operations teams.

What Splunk SIEM actually is and what it delivers

At its core, Splunk SIEM is an implementation of Security Information and Event Management built on a high-throughput indexing and search architecture. It is designed to centralize machine data from logs, events, telemetry and packet metadata, and then to provide the tools security operations teams need to turn that data into intelligence. Key capabilities include log collection at scale, event parsing and normalization, indexed storage for fast search, correlation rules and analytics for detection, visualizations and dashboards for situational awareness, and workflows for incident investigation and response.

Core capability areas

- Data ingestion and normalization that supports diverse sources including network devices, endpoints, cloud services and applications

- Indexing and storage optimized for fast ad hoc search and historical analysis

- Search and analytics, including a domain-specific query language for correlation and pattern detection

- Prebuilt content and rule sets that accelerate detection coverage across common threat vectors

- Alerting and case management to track investigations and enable response handoffs

- Integration points with orchestration, endpoint detection and response (EDR) and cloud security tools

How Splunk fits into a modern security stack

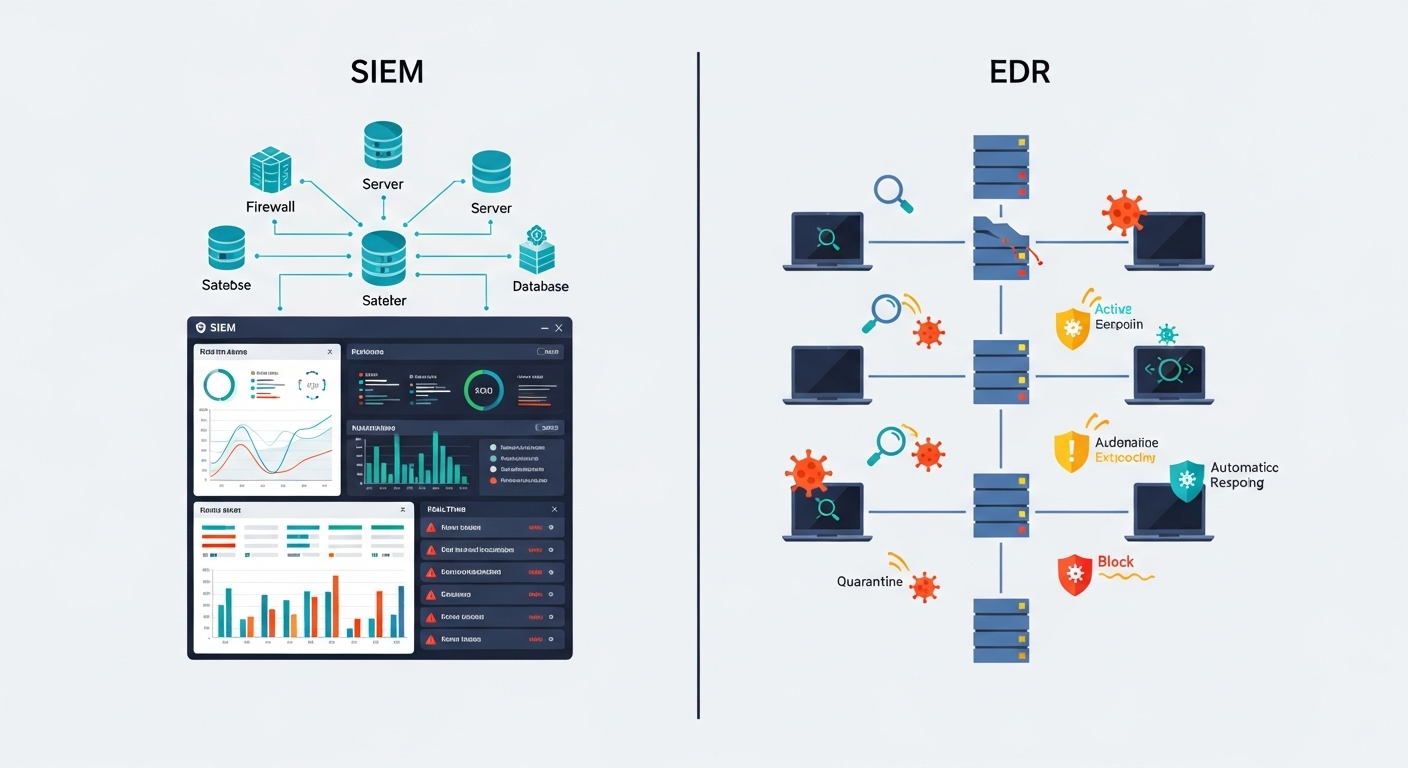

Splunk SIEM sits at the center of monitoring and detection. It ingests telemetry and events from security controls and infrastructure, and then feeds analytics engines, threat hunting workflows and SOAR playbooks. In enterprise environments it often integrates with endpoint detection platforms, network sensors, cloud security posture tools, identity and access logs, and threat intelligence feeds. These integrations let Splunk correlate identity context with endpoint telemetry and network flows to reveal sophisticated attack patterns that no single sensor can detect on its own.

Architecture and key components

Understanding how Splunk processes data requires a view of its component architecture. The core components that drive the SIEM experience are data collectors, forwarders, indexers and search heads. Each component has a clear role in moving data from origin to insight.

Data collectors and forwarders

Data collection begins with forwarders deployed near sources. Forwarders can be lightweight agents installed on hosts, syslog collectors, or cloud connectors for services and APIs. Their job is to gather raw logs, apply minimal preprocessing such as compression and buffering, and securely transmit the data to indexers. Reliable delivery and efficient transport are fundamental, because data loss or excessive latency reduces detection fidelity.
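The forwarding pattern described above (buffer locally, compress, transmit, and discard only after delivery is confirmed) can be sketched in a few lines of Python. This is not how Splunk's forwarders are implemented; `BufferedForwarder` and its `send` callback are hypothetical names, and the callback stands in for whatever transport and acknowledgment mechanism a real deployment uses.

```python
import gzip
import json
from typing import Callable


class BufferedForwarder:
    """Hypothetical forwarder sketch: buffer events, then ship gzip-compressed batches."""

    def __init__(self, send: Callable[[bytes], bool], batch_size: int = 100) -> None:
        self.send = send              # transport callback; returns True when delivery is acknowledged
        self.batch_size = batch_size
        self.buffer: list[dict] = []

    def collect(self, event: dict) -> None:
        self.buffer.append(event)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        if not self.buffer:
            return
        # Newline-delimited JSON, compressed to reduce bandwidth on the wire.
        payload = gzip.compress("\n".join(json.dumps(e) for e in self.buffer).encode("utf-8"))
        # Clear the buffer only after an acknowledged send, so a failed batch is retried later.
        if self.send(payload):
            self.buffer.clear()
```

Keeping unacknowledged batches in memory is what protects against loss during short network interruptions; a production forwarder would also cap the buffer and persist it to disk.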

Indexers and storage

Indexers receive event streams, parse them, and write the indexed data across multiple buckets. Indexing transforms raw events into searchable records with time-based keys and searchable fields. The indexing layer is engineered to support high-velocity ingestion and parallel search across large data sets. Storage architectures range from local high-performance disks to distributed object storage, depending on retention and cost objectives.
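The value of time-based bucketing is that a search can skip entire buckets whose time range falls outside the query window. The toy index below illustrates that pruning step. It assumes fixed one-hour buckets for simplicity; real Splunk buckets roll over according to configurable size and age limits, and `TimeBucketedIndex` is a hypothetical name.

```python
from collections import defaultdict

BUCKET_SECONDS = 3600  # assumption: fixed one-hour buckets, purely for illustration


class TimeBucketedIndex:
    """Toy index that groups events into time-range buckets."""

    def __init__(self) -> None:
        self.buckets: dict[int, list[dict]] = defaultdict(list)  # bucket start (epoch s) -> events

    def add(self, event: dict) -> None:
        start = event["_time"] - event["_time"] % BUCKET_SECONDS
        self.buckets[start].append(event)

    def search(self, earliest: int, latest: int, **filters) -> list[dict]:
        """Return events in [earliest, latest) whose fields match every keyword filter."""
        hits = []
        for start, events in self.buckets.items():
            # Skip whole buckets whose time range cannot overlap the search window.
            if start + BUCKET_SECONDS <= earliest or start >= latest:
                continue
            hits.extend(
                e for e in events
                if earliest <= e["_time"] < latest
                and all(e.get(k) == v for k, v in filters.items())
            )
        return sorted(hits, key=lambda e: e["_time"])
```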

Search heads and analytics

Search heads coordinate queries across indexers and present results to analysts. They run searches written in Splunk's Search Processing Language (SPL) and manage saved searches, dashboards and correlation rules. In enterprise-scale deployments, search heads can be clustered for high availability and to distribute application logic for a faster user experience.
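Conceptually, a distributed search behaves like a scatter-gather: each indexer computes a partial result over its own data, and the search head merges the partials. The sketch below shows that idea for a simple count by field. The function names are hypothetical, and in-memory lists stand in for indexers; it illustrates the pattern rather than Splunk's internal search pipeline.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def indexer_partial_count(events: list[dict], field: str) -> Counter:
    """Map step, run on each indexer: count values of `field` in its local events."""
    return Counter(e[field] for e in events if field in e)


def search_head_count(indexers: list[list[dict]], field: str) -> Counter:
    """Reduce step on the search head: query all indexers in parallel, then merge partials."""
    with ThreadPoolExecutor() as pool:
        partials = list(pool.map(lambda evts: indexer_partial_count(evts, field), indexers))
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total
```

Because only small partial aggregates travel back to the search head, adding indexers increases both ingest and search capacity.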

Apps, content and extensions

Splunk supports a modular ecosystem of apps and add-ons that provide data models, dashboards and detection logic for specific technologies. Security analytics applications extend the baseline with prebuilt correlation searches, field extractions, lookups and threat intelligence integration that speed up detection and compliance programs.

When designing Splunk SIEM for enterprise use, prioritize data fidelity, retention policies aligned with detection use cases, and indexing strategies that enable rapid searches across long time windows. Poorly planned ingestion inflates storage costs and slows searches, and slow searches directly lengthen mean time to detect.

How Splunk SIEM works step by step

The operational flow in Splunk SIEM can be expressed as a sequence of steps from data acquisition to automated action. The following steps outline a practical end-to-end flow that SOC teams execute daily.

Instrument sources

Deploy forwarders, API connectors and collectors to capture logs from endpoints, network devices, cloud services and applications. Establish consistent schemas and synchronized time sources so that events can be correlated across sources.
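Synchronized clocks are only half of the problem; sources also write timestamps in different formats and time zones. A minimal sketch of normalizing them to UTC epoch seconds, assuming each source type has a known format that includes a UTC offset (the source names and formats here are hypothetical examples):

```python
from datetime import datetime, timezone

# Hypothetical per-source timestamp formats; every format here carries a UTC offset (%z).
SOURCE_FORMATS = {
    "firewall": "%Y-%m-%dT%H:%M:%S%z",     # e.g. 2024-05-01T10:00:00+0200
    "legacy_app": "%d/%b/%Y:%H:%M:%S %z",  # e.g. 01/May/2024:08:00:00 +0000
}


def to_utc_epoch(raw_ts: str, source: str) -> float:
    """Parse a source-specific timestamp and return UTC epoch seconds for cross-source correlation."""
    parsed = datetime.strptime(raw_ts, SOURCE_FORMATS[source])
    return parsed.astimezone(timezone.utc).timestamp()
```

With both values on the same UTC scale, a firewall event logged at 10:00 local time (UTC+2) and an application event logged at 08:00 UTC line up as the same instant.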

Normalize and index

Apply field extraction and enrichment to normalize events. Index events to create searchable records with persistent fields for identity, host, process and network context.
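Normalization usually means mapping each vendor's field names onto one shared schema so that a single correlation rule works across all sources. The sketch below uses a hypothetical alias table loosely modeled on field names such as `src`, `dest` and `user` from Splunk's Common Information Model; the vendor-side names are illustrative only.

```python
# Hypothetical alias map: vendor-specific field names -> one common schema.
FIELD_ALIASES = {
    "src_ip": "src", "SourceAddress": "src",
    "dst_ip": "dest", "DestinationAddress": "dest",
    "username": "user", "TargetUserName": "user",
}


def normalize(event: dict) -> dict:
    """Rename known vendor fields to common names and keep unknown fields untouched."""
    return {FIELD_ALIASES.get(key, key): value for key, value in event.items()}
```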

Enrich with threat intelligence

Correlate incoming events with threat intelligence feeds and internal asset information to add risk context. Tag indicators with confidence and source metadata to support prioritization.
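The enrichment step can be pictured as two lookups per event: one against an indicator table carrying source and confidence metadata, and one against an asset inventory. The sketch below assumes both are simple in-memory dictionaries; `Indicator`, `enrich` and the output field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    value: str
    source: str       # which feed supplied the indicator
    confidence: int   # 0-100, as scored by the feed or by analysts


def enrich(event: dict, intel: dict[str, Indicator], asset_criticality: dict[str, str]) -> dict:
    """Attach threat-intel matches and asset context to a copy of the event."""
    enriched = dict(event)
    hits = [intel[event[f]] for f in ("src", "dest") if event.get(f) in intel]
    enriched["ti_matches"] = [(i.value, i.source, i.confidence) for i in hits]
    enriched["ti_max_confidence"] = max((i.confidence for i in hits), default=0)
    enriched["asset_criticality"] = asset_criticality.get(event.get("dest"), "unknown")
    return enriched
```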

Run detection analytics

Run correlation searches and threshold-based rules that identify patterns ranging from simple indicators to advanced techniques spanning extended time windows. Use machine learning analytics to detect anomalies against established baselines.
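A classic time-window correlation is "many failed logins followed by a success for the same user." In Splunk this would be written in SPL; the Python sketch below expresses the same logic with a per-user sliding window so the mechanics are explicit. The threshold, window and field names are assumptions for illustration.

```python
from collections import defaultdict, deque


def brute_force_then_success(events: list[dict], threshold: int = 5, window: int = 600) -> list[dict]:
    """Flag users with >= `threshold` failures followed by a success within `window` seconds.

    `events` must be sorted by `_time`; each has `user`, `action` ('failure' or 'success') and `_time`.
    """
    failures: dict[str, deque] = defaultdict(deque)  # user -> timestamps of recent failures
    alerts = []
    for e in events:
        recent = failures[e["user"]]
        # Drop failures that have slid out of the correlation window.
        while recent and e["_time"] - recent[0] > window:
            recent.popleft()
        if e["action"] == "failure":
            recent.append(e["_time"])
        elif e["action"] == "success" and len(recent) >= threshold:
            alerts.append({"user": e["user"], "_time": e["_time"], "failures": len(recent)})
            recent.clear()
    return alerts
```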

Alert and score

Generate alerts with severity and risk scores. Enrich alerts with contextual artifacts and precalculated entities to accelerate triage. Route alerts to SOC consoles and case management systems.
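One simple way to turn severity and context into a single sortable number is a weighted score. The weights below are hypothetical and exist only to show how rule severity, asset criticality and threat-intelligence confidence might combine; any real scoring scheme should be tuned to the environment.

```python
# Hypothetical weights; tune them to your own environment.
SEVERITY_WEIGHT = {"low": 10, "medium": 30, "high": 60, "critical": 80}
CRITICALITY_MULTIPLIER = {"low": 0.5, "medium": 1.0, "high": 1.5, "unknown": 1.0}


def risk_score(severity: str, asset_criticality: str, ti_confidence: int = 0) -> int:
    """Combine rule severity, asset criticality and threat-intel confidence into a 0-100 score."""
    score = SEVERITY_WEIGHT[severity] * CRITICALITY_MULTIPLIER.get(asset_criticality, 1.0)
    score += 0.2 * ti_confidence  # a threat-intel match adds up to 20 points
    return min(100, round(score))
```

Sorting the alert queue by this score lets analysts work high-impact incidents first, which is the prioritization goal described above.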

Investigate with context

Analysts pivot across logs, asset inventories and historical events. Visualizations and timeline views help reconstruct attack chains and identify root causes.

Respond and automate

Execute manual mitigation steps or trigger automated playbooks that contain the incident. Integrate with orchestration platforms to isolate hosts, block network flows and push endpoint actions.

Measure and refine

Track detection coverage, false positive rates and mean time to detect and respond. Iterate on rules, enrichment and data coverage to reduce noise and increase detection fidelity.

Data ingestion patterns and parsing strategies

Effective ingestion is more than collecting every log. It requires identifying which sources and event types are critical for detection, setting appropriate retention and configuring parsers that extract meaningful fields. Splunk supports several ingestion patterns that match different operational needs.

Push versus pull

In agent-based deployments, forwarders push data to indexers or intermediate relays. In cloud-native scenarios, connectors pull data from APIs. Push generally suits low-latency collection with reliable delivery (for example, with indexer acknowledgment enabled on forwarders); pull suits sources whose APIs already return structured, filtered records.
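A pull connector's main job is to remember where it left off, so each polling run fetches only new records. The sketch below pages through a source using a time-based checkpoint. `fetch_page` is a hypothetical wrapper around a provider API, assumed to return records oldest first.

```python
def pull_new_records(fetch_page, checkpoint, page_size=100):
    """Pull every record newer than `checkpoint`, one page at a time.

    `fetch_page(since, limit)` is a hypothetical API wrapper returning up to `limit`
    records with `_time` > since, oldest first. Returns (records, new_checkpoint);
    persist new_checkpoint so the next run resumes where this one stopped.
    """
    collected, since = [], checkpoint
    while True:
        page = fetch_page(since, page_size)
        collected.extend(page)
        if page:
            since = page[-1]["_time"]  # advance to the newest record seen so far
        if len(page) < page_size:      # a short page means we have caught up with the source
            return collected, since
```

A strictly time-based cursor can skip records that share a timestamp across a page boundary; where the provider offers an opaque continuation token, that is usually the safer checkpoint.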

Structured and unstructured data

Many modern sources produce structured JSON that maps easily to fields, while legacy systems and custom applications often emit free-form logs. Invest in extraction rules and lookups to normalize unstructured events into the consistent fields that correlation rules and dashboards depend on.
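Extraction rules for free-form logs are typically regular expressions with named capture groups (Splunk's `rex` command and field extractions use the same idea). The sketch below parses one hypothetical legacy log format; the pattern and field names are assumptions, not a format any particular product emits.

```python
import re

# Hypothetical free-form line from a legacy application:
#   "2024-05-01 10:00:03 WARN auth: login failed for user=bob from 198.51.100.4"
LOGIN_PATTERN = re.compile(
    r"^(?P<timestamp>\S+ \S+) (?P<level>[A-Z]+) auth: login (?P<outcome>failed|succeeded) "
    r"for user=(?P<user>\S+) from (?P<src>\d{1,3}(?:\.\d{1,3}){3})$"
)


def extract_fields(line: str) -> dict:
    """Return named fields from a matching line, or an empty dict so unparsed lines can be counted."""
    match = LOGIN_PATTERN.match(line)
    return match.groupdict() if match else {}
```

Tracking how many lines return an empty result is a cheap way to spot format drift before it silently breaks a detection.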

Envelope metadata

Preserve original timestamps and source metadata by capturing envelope attributes such as host, source type, application and arrival time. These attributes are central to fast filtering and reduce ambiguous matches during correlation.

Ingest at full fidelity only what you need. For noisy telemetry where historical aggregates are sufficient, sample rather than indexing every record. This preserves index performance and reduces cost without materially weakening detection, provided your detections do not depend on the sampled sources record by record.
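Sampling works best when it is deterministic: hashing a stable key (such as a flow or session ID) means the same entity is always kept or always dropped, so sampled records remain internally consistent. A minimal sketch, assuming the caller chooses the key and the rate:

```python
import hashlib


def keep_event(event_key: str, sample_rate: float) -> bool:
    """Deterministic sampling: the same key always yields the same keep/drop decision."""
    digest = hashlib.sha256(event_key.encode("utf-8")).digest()
    position = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform value in [0, 1)
    return position < sample_rate
```

When aggregating sampled data, dividing kept counts by `sample_rate` gives an estimate of the original volume.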

Detection engineering in Splunk

Detection engineering turns threat hypotheses into reproducible searches and alerts. Splunk enables this through a combination of search language capabilities, scheduled correlation searches and machine learning toolkits.

Search processing language and correlation rules

Splunk's Search Processing Language (SPL) supports joins, subsearches, statistical aggregation and time-window correlation. Detection engineers translate tactics, techniques and procedures into search patterns that map to observable behaviors. Effective rules include an assertion of normal behavior, a detection condition, and a response or enrichment step.

Anomaly detection and machine learning

In addition to signature-like rules, Splunk provides a Machine Learning Toolkit for identifying statistical deviations and unusual sequences. Models can profile network baselines or user behavior and then surface outliers that may indicate compromise. Machine learning can reduce rule maintenance for noisy behaviors that lack simple deterministic signatures, although the models themselves still need periodic retraining and tuning.
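The simplest form of baseline-and-outlier detection is a z-score: measure how many standard deviations an observation sits from its historical mean. The sketch below is a deliberately basic stand-in for the statistical models an ML toolkit provides; the function name and the default threshold of 3 are assumptions.

```python
from statistics import mean, stdev


def zscore_outliers(baseline: list[float], observed: dict[str, float],
                    threshold: float = 3.0) -> dict[str, float]:
    """Return entities whose observed value is more than `threshold` std devs from the baseline mean."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        # A flat baseline: any deviation at all is treated as anomalous.
        return {k: float("inf") for k, v in observed.items() if v != mu}
    return {k: (v - mu) / sigma for k, v in observed.items() if abs(v - mu) / sigma > threshold}
```

For example, with a baseline of daily outbound megabytes per user, a user who suddenly sends several times the usual volume stands out immediately while normal variation stays below the threshold.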

Testing, tuning and risk scoring

Every detection rule must be tested against historical data sets to estimate false positive rates and detection yield. Integrate risk scoring into alerts so analysts can prioritize high impact incidents. Automated tuning pipelines can disable or adjust noisy rules based on feedback from event adjudication.
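Backtesting a rule against historical events that analysts have already labeled yields the false positive rate and detection yield mentioned above. A minimal sketch, assuming the rule is any callable that returns True when it fires and each labeled event is paired with True for malicious:

```python
def backtest(rule, labeled_events):
    """Run a detection rule over labeled history and report precision, recall and false positive rate."""
    tp = fp = fn = tn = 0
    for event, is_malicious in labeled_events:
        fired = rule(event)
        if fired and is_malicious:
            tp += 1
        elif fired:
            fp += 1
        elif is_malicious:
            fn += 1
        else:
            tn += 1
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,          # the detection yield
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }
```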

Use cases and real world scenarios

Splunk SIEM supports a broad set of use cases across security and compliance. Below are representative scenarios where Splunk adds measurable value for enterprise security programs.

Threat detection and hunting

Using correlated telemetry, Splunk can detect lateral movement, credential abuse and data exfiltration. Threat hunters use historical queries and entity-centric views to identify stealthy adversaries that evade rule-based detection.

Incident response and forensic reconstruction

When an incident occurs, Splunk provides a timeline of events correlated to assets and user accounts. Investigators can pivot from alerts to raw logs, extract indicators of compromise and initiate containment actions that are repeatable and auditable.

Compliance and audit reporting

Many compliance frameworks require centralized logging and retention. Splunk simplifies compliance evidence collection by providing dashboards and saved searches that demonstrate controls and alerting coverage over time.

Deployment models and scaling considerations

Enterprise deployments must choose between cloud, on-premises and hybrid models. Each model influences operational overhead, compliance posture and cost.

Cloud native

Cloud deployments offer elasticity and reduce the operational burden of hardware. They simplify scaling for burst ingestion but require attention to data residency and regulatory constraints.

On-premises and hybrid

On-premises deployments give full control over data sovereignty and network isolation. Hybrid models combine cloud storage for long-term retention with on-premises indexers for low-latency investigative work. Consider network topology and replication when designing hybrid architectures.

Sizing for retention and search performance

Plan index capacity based on expected daily ingestion rates, retention windows and index replication. Longer retention windows increase storage costs but enable threat hunting over extended periods. Ensure search head capacity scales with concurrent analyst workloads to preserve interactive performance.
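The sizing inputs above combine into a back-of-the-envelope storage estimate. The sketch below assumes a commonly cited rule of thumb that compressed raw data takes about 15% of ingested volume and index files about 35%, and that in an indexer cluster every replicated copy stores raw data while only searchable copies store index files. Treat all four ratios and factors as assumptions to validate against your own data.

```python
def estimate_cluster_storage_gb(daily_ingest_gb: float, retention_days: int,
                                replication_factor: int = 3, search_factor: int = 2,
                                rawdata_ratio: float = 0.15, index_ratio: float = 0.35) -> float:
    """Rough total indexer-cluster storage for a retention window (all defaults are assumptions)."""
    per_day_gb = daily_ingest_gb * (rawdata_ratio * replication_factor + index_ratio * search_factor)
    return per_day_gb * retention_days
```

With these defaults, 500 GB/day retained for 90 days works out to about 51,750 GB (roughly 52 TB) across the cluster, before any headroom for growth or bursts.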

Integration and ecosystem

Splunk becomes more powerful when integrated with the broader security ecosystem. Common integrations add depth to alerts and enable automated mitigations.

Integration patterns

- Threat intelligence feeds added as lookups for indicator enrichment

- Endpoint detection platforms pushing detailed telemetry to Splunk for correlation

- Cloud service providers delivering audit logs via connectors

- SOAR and orchestration platforms receiving alerts for automated playbooks

APIs and automation

Splunk provides REST APIs to query data, manage alerts and automate operational tasks. SOC teams use APIs to integrate Splunk with ticketing systems, orchestration engines and internal dashboards. Automation reduces manual toil and improves the speed of containment.
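As a sketch of API-driven automation, the function below builds (but does not send) a request to Splunk's search export endpoint on the management port, authenticating with a bearer token. The host, token and query are placeholders, and endpoint paths and parameters should be confirmed against the REST API reference for your Splunk version.

```python
import urllib.parse
import urllib.request


def build_export_request(host: str, token: str, spl: str,
                         earliest: str = "-24h") -> urllib.request.Request:
    """Build a POST to the search export endpoint; host and token are placeholders."""
    # Event searches submitted over REST need an explicit leading "search" command.
    if not spl.lstrip().startswith(("search", "|")):
        spl = f"search {spl}"
    body = urllib.parse.urlencode({
        "search": spl,
        "earliest_time": earliest,
        "output_mode": "json",
    }).encode("utf-8")
    return urllib.request.Request(
        f"https://{host}:8089/services/search/jobs/export",
        data=body,
        headers={"Authorization": f"Bearer {token}"},
        method="POST",
    )
```

Sending the request is then a call to `urllib.request.urlopen(req)` with appropriate TLS verification; a ticketing or orchestration integration would parse the streamed results and create or update cases accordingly.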

Comparative capabilities

Enterprises considering Splunk often evaluate it against alternatives. The following data table highlights key capability dimensions to compare.

Cost model and licensing overview

Understanding cost drivers is essential for predictable budgeting. Splunk licensing has historically been based on daily ingest volume, but capacity-based approaches are also available depending on the deployment. Key cost factors include daily ingest rates, retention periods, index replication, search head clusters, and licensing for premium modules.

Cost management strategies

- Prioritize high fidelity retention for data that directly supports detection and investigation

- Use cold storage tiers for older data that is rarely queried but must be retained for compliance

- Apply sampling or aggregation to noisy telemetry where full fidelity is not required

- Negotiate committed usage and review licensing tiers to match expected growth and burst patterns

Operational maturity and governance

Successful SIEM deployments require structured governance that covers operational processes, detection engineering discipline and continuous improvement cycles. Treat Splunk as a living platform that needs the same stewardship as other critical infrastructure.

Key operational roles

- Platform engineers who manage ingestion pipelines, indexing and infrastructure scaling

- Detection engineers who author and maintain correlation searches and analytic models

- SOC analysts who triage and investigate alerts and perform threat hunting

- Incident responders who own containment and remediation coordination

- Governance owners who track compliance and retention policy adherence

KPI driven metrics

Measure the effectiveness of your deployment with concrete KPIs. Examples include mean time to detect, mean time to respond, number of high fidelity detections per period, false positive rates, and analyst efficiency metrics such as alerts per analyst and time per investigation. These metrics drive investments into data coverage and automation.
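Mean time to detect and mean time to respond are simple averages once incident records carry consistent timestamps. Definitions vary between organizations; the sketch below assumes MTTD runs from occurrence to detection and MTTR from detection to resolution, and skips incidents that are still open. The record keys are hypothetical.

```python
from datetime import datetime
from statistics import mean


def mean_minutes(incidents: list[dict], start_key: str, end_key: str):
    """Average gap in minutes between two timestamps, over incidents that have both."""
    gaps = [(i[end_key] - i[start_key]).total_seconds() / 60
            for i in incidents if i.get(start_key) and i.get(end_key)]
    return mean(gaps) if gaps else None


incidents = [
    {"occurred": datetime(2024, 5, 1, 10, 0), "detected": datetime(2024, 5, 1, 10, 30),
     "resolved": datetime(2024, 5, 1, 12, 30)},
    {"occurred": datetime(2024, 5, 2, 9, 0), "detected": datetime(2024, 5, 2, 10, 30),
     "resolved": None},  # still open, so excluded from MTTR
]
mttd = mean_minutes(incidents, "occurred", "detected")  # (30 + 90) / 2 = 60 minutes
mttr = mean_minutes(incidents, "detected", "resolved")  # 120 minutes, from the closed incident
```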

Correlate SIEM operational metrics with business outcomes. Improvements in mean time to detect and shorter breach dwell time generally translate into lower incident costs and reduced risk to critical systems.

Migration and modernization best practices

Organizations often migrate to Splunk from legacy systems or consolidate multiple monitoring solutions. A staged migration reduces risk and enables iterative validation.

Migration steps

Assess current state

Catalogue existing log sources, retention policies and detection content. Determine which sources provide high value for detection and which can be deprioritized.

Define target architecture

Choose cloud or on-premises deployment, and design index capacity, search head sizing and replication strategies. Establish data lifecycle policies for hot, warm and cold tiers.

Pilot with high value sources

Onboard a subset of critical log sources to validate parsing rules and detection content. Use the pilot to tune alerts and optimize storage.

Migrate detections and workflows

Recreate detection logic in the new environment and validate detection outcomes against historical incidents. Migrate automation playbooks and case management integrations.

Operationalize and iterate

Transition monitoring to the platform team and implement continuous tuning pipelines that adjust rules based on analyst feedback and false positive trends.

Advanced topics and optimization techniques

For mature deployments there are advanced tactics that improve detection coverage and reduce operational cost. These optimizations often require coordination between platform, network and security teams.

Entity-centric data models

Model users and hosts as first-class entities with associated attributes and histories. Entity-centric approaches enable faster pivoting during investigations and reduce false positives by correlating behavior across related objects.

Adaptive sampling and retention

Implement tiered retention with policies that move data into cheaper storage as it ages. Use adaptive sampling on chatty sources and retain full fidelity only when triggered by a relevant event or investigation.
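Tiered retention boils down to mapping data age onto a storage class. Splunk's bucket lifecycle uses the hot, warm, cold and frozen stages for this; the sketch below uses hypothetical day boundaries purely to show the decision, and real thresholds should follow your detection and compliance requirements.

```python
# Hypothetical tier boundaries in days; align them with detection and compliance needs.
TIERS = [("hot", 7), ("warm", 30), ("cold", 365)]


def storage_tier(age_days: int) -> str:
    """Return the tier for data of the given age, or 'frozen' once it exceeds every boundary."""
    for tier, max_age in TIERS:
        if age_days <= max_age:
            return tier
    return "frozen"  # archived or deleted, depending on policy
```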

Detection lifecycle automation

Create feedback loops where analyst adjudications automatically adjust rule thresholds or disable noisy detections. Automate suppression windows for maintenance periods to reduce alert storming during planned changes.
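The feedback loop and suppression windows can be sketched as two small pieces: a detection that raises its own threshold when analysts keep marking its alerts as false positives, and a check that mutes alerts during planned maintenance. The window size, false-positive cutoff and 25% step are hypothetical tuning values; disabling a rule outright could be added along the same lines.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Detection:
    name: str
    threshold: int
    verdicts: list = field(default_factory=list)  # analyst adjudications: True = true positive

    def record_verdict(self, true_positive: bool, window: int = 10, max_fp_rate: float = 0.8) -> None:
        """After each full window of verdicts, raise the threshold if the rule was mostly noise."""
        self.verdicts.append(true_positive)
        if len(self.verdicts) >= window:
            fp_rate = self.verdicts.count(False) / len(self.verdicts)
            if fp_rate > max_fp_rate:
                self.threshold += max(1, self.threshold // 4)  # hypothetical ~25% step
            self.verdicts.clear()


def suppressed(alert_time: datetime, maintenance_windows: list[tuple[datetime, datetime]]) -> bool:
    """True if the alert falls inside any planned maintenance window."""
    return any(start <= alert_time < end for start, end in maintenance_windows)
```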

When to consider Splunk and when to explore alternatives

Splunk is a strong fit for organizations that require deep analytics, broad ecosystem integration and enterprise scale. Consider alternatives if your environment demands a simpler managed solution with lower operational overhead, or if ingest volumes are modest and cost sensitivity is extreme. For teams evaluating market options, review detection coverage, ease of integration, total cost of ownership and vendor support models.

Practical recommendations for enterprises

Operational success with Splunk SIEM depends on three pragmatic choices. First, choose a deployment architecture that supports your retention and sovereignty requirements. Second, focus initially on high-value telemetry and progressively onboard additional sources. Third, invest in detection engineering and automation so that alerts map to actionable investigations.

Checklist for first 90 days

- Deploy forwarders on critical systems and validate timestamp accuracy

- Onboard identity and endpoint telemetry as priority sources

- Deploy a set of baseline detection rules and tune thresholds using a pilot data set

- Integrate at least one threat intelligence feed for indicator enrichment

- Establish incident workflows that route high severity alerts into case management

Where to get help and additional resources

Adopting and optimizing a SIEM platform is a multidisciplinary effort. If your team needs architect-level assistance or managed services, consider reaching out to experienced providers that can accelerate deployment and drive operational maturity. Explore vendor resources and technical blogs for recipe-style guides to common integrations and detection content. For a broader view of market options, review comparative analyses and top tool roundups that map capabilities to enterprise requirements.

For organizations looking at alternatives and comparative lists, consult the CyberSilo deep dive on top SIEM tools to understand how Splunk compares to other offerings and to identify the right fit for your environment. If you want to evaluate an enterprise-grade SIEM built for intensive security operations, consider the Threat Hawk SIEM solution, which blends detection content with managed services and integration support. For hands-on guidance and to discuss architecture and deployment options, contact our security team to schedule a technical review and readout.

CyberSilo maintains an ecosystem of resources for security leaders evaluating SIEM investments. Use those materials to align technical selection with business risk and compliance objectives. When your team is ready, deploy a pilot that focuses on critical detection use cases and measure outcomes against defined KPIs.

Conclusion and next steps

Splunk SIEM is a capability-rich platform that enables enterprises to collect vast amounts of telemetry and then apply correlation, analytics and orchestration to detect and remediate threats. Success requires careful planning around data ingestion, indexing and detection engineering. Architect for scale and retention, prioritize high-value sources, and invest in automation to reduce manual work for analysts. For more detailed vendor comparisons and tactical guidance, review the CyberSilo materials and the top SIEM tools analysis. If you want tailored assistance designing a Splunk deployment, or want to understand how Threat Hawk SIEM can complement your security operations, contact our security team for a consultation and technical assessment.

For teams that want a hands-on migration path, run an iterative pilot to validate parsing rules, detection logic and retention policies before rolling out enterprise-wide. Track KPIs such as mean time to detect and mean time to respond, and use those metrics to justify further investments. Finally, ensure governance and operational ownership are in place so that Splunk SIEM continues to deliver value over time.

For additional reading and tool selection guidance, review the top SIEM tools comparison on the CyberSilo site and engage with the Threat Hawk SIEM team to design a solution that aligns with your threat model and operational priorities. If you are ready to begin, contact our security team to schedule an assessment and roadmap session.